Lunar Autonomy Challenge

Competition to create an Autonomously Mapping Lunar Rover

This post details my experience working on the Lunar Autonomy Challenge, which eventually became the basis for my thesis. Much of the technical work is covered in the thesis itself, which can be found at the link above. This post offers a bit more of my personal point of view.

Beginning at the end of 2024, I founded and led a team within my lab (the Human Centered-Autonomy Lab) for the Lunar Autonomy Challenge (LAC), a competition created through a collaboration between NASA, Caterpillar, Johns Hopkins University, and Embodied AI. This was the first time NASA ran this competition. Most of my labmates told me this would not be their main focus going forward, so I ended up developing nearly the entire solution. That said, almost all of my teammates lent me their ears and thoughts many times throughout this process. I am forever grateful for their guidance and input.

The LAC challenged teams across the U.S. to simulate a lunar excavator mobile robot, the ISRU Pilot Excavator (IPEx), autonomously exploring the surface of the moon and mapping elevation in an area around a lunar lander. This would be achieved by focusing on solutions for navigation, mapping, and localization.

As a rough timeline, our team developed a plan in November of 2024. I worked on it until the qualification deadline at the end of February of 2025. I was unable to complete a submittable solution at this point. From that time until mid-July 2025, I made small amounts of progress. I was not sure whether I wanted to pursue this subject more or research some other topics I was looking at during that time. Eventually, I chose to continue working on a solution and use the work for my thesis in order to graduate in the fall of 2025.

This project allowed me to explore and improve my understanding of many areas inherent to exploration rovers. I dedicated hours to researching topics that were new to me from the ground up. I gained hands-on experience in:

- Path planning techniques for autonomous navigation

- Object detection and avoidance algorithms, especially using grid map data

- Computer vision algorithms to generate a point cloud from a stereo camera pair

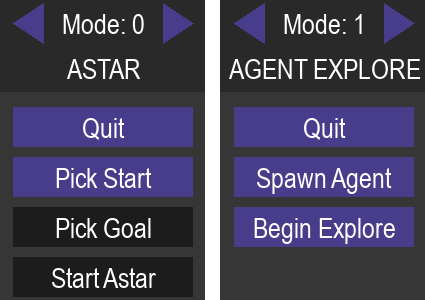

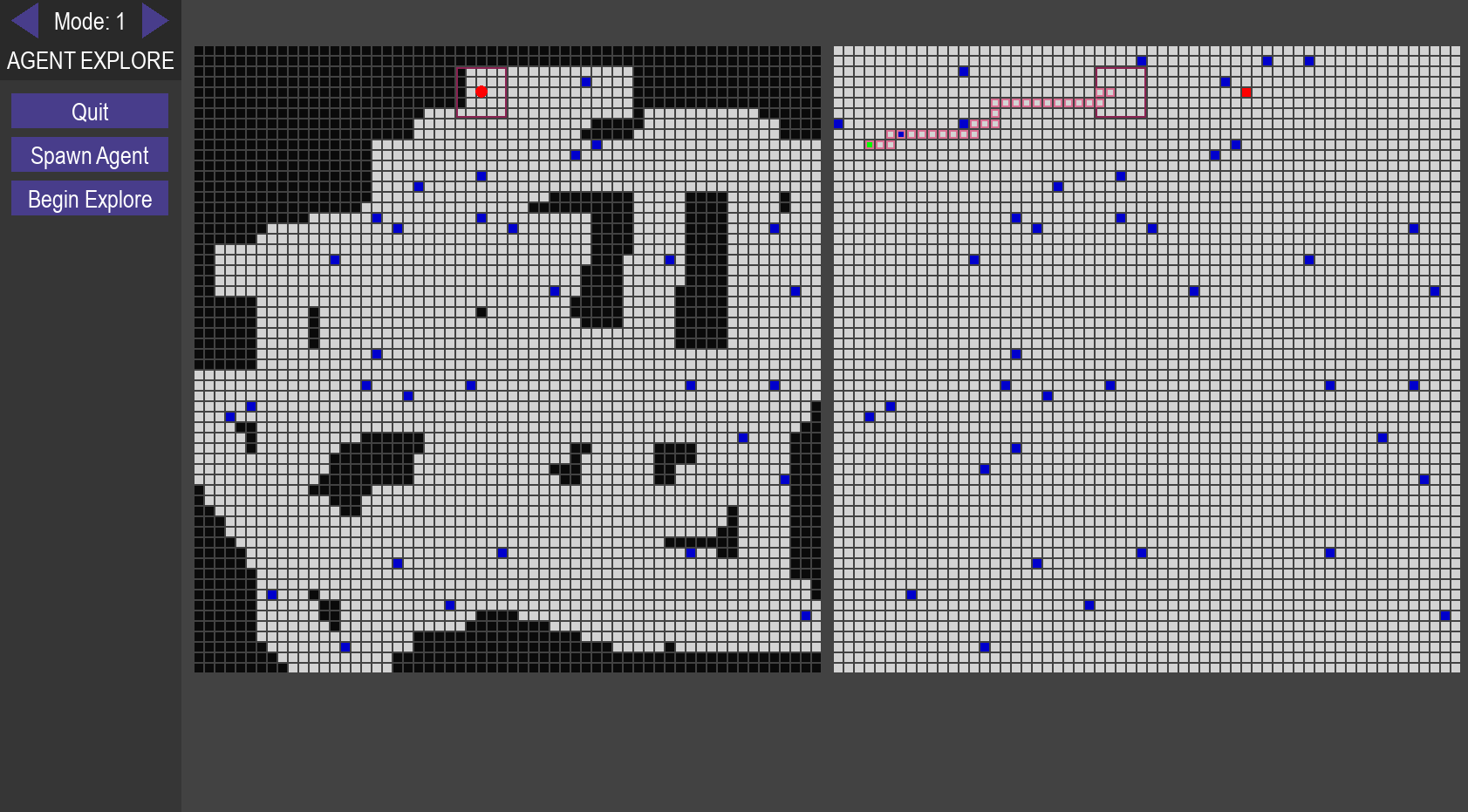

- Using PyGame to create small applications, like our 2D navigation simulator

- Creating and reading Dockerfiles and Docker environments

- Creating scripts to display, debug, and animate grid map data collected

- Odometry solutions and packages, specifically visual-inertial odometry

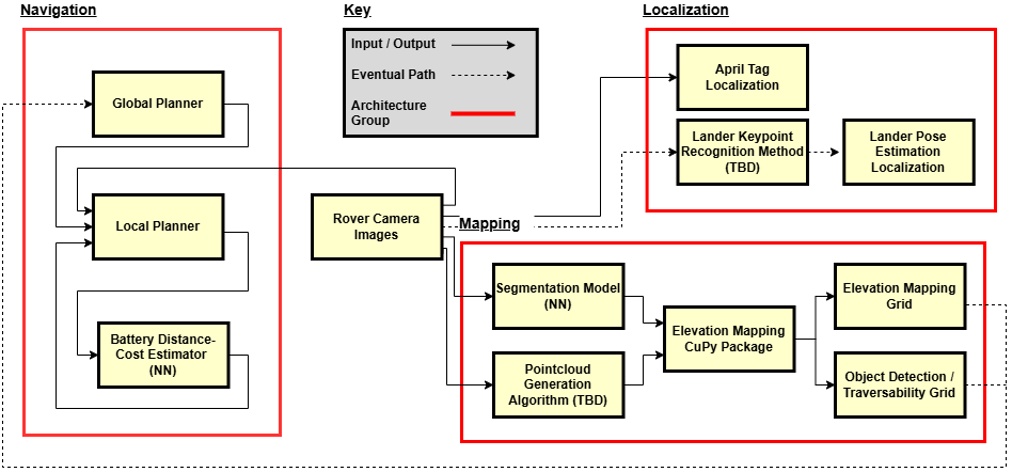

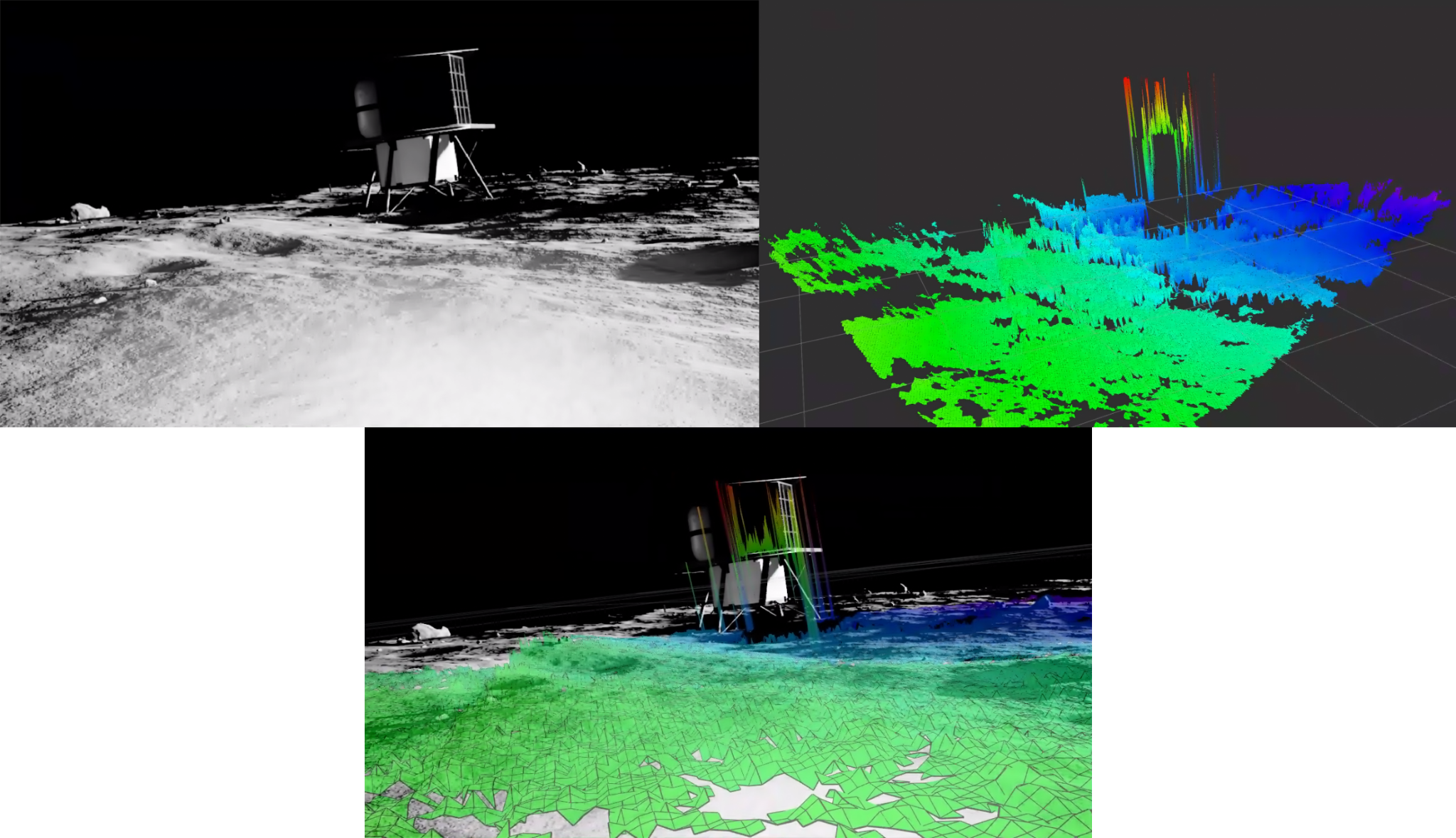

The figure below shows the initial approach. We would use the software package “Elevation Mapping CuPy” to map the terrain. It requires a point cloud of the surrounding area as well as ROS-style coordinate frames for localization. We also wanted to combine a segmentation model with the mapping data in order to properly identify obstacles. This data would feed the navigation stack, consisting of a global planner optimizing for unexplored areas of the terrain and a local planner for obstacle avoidance. You’ll note we wanted to use another neural network that estimated our battery usage for a planned path. This ended up not being important for the qualifier round, as no battery recharging was necessary for the limited mission. For localization, we relied on AprilTags attached to the central lunar lander; however, we wanted to develop a different method down the line using keypoints in order to gain bonus points.

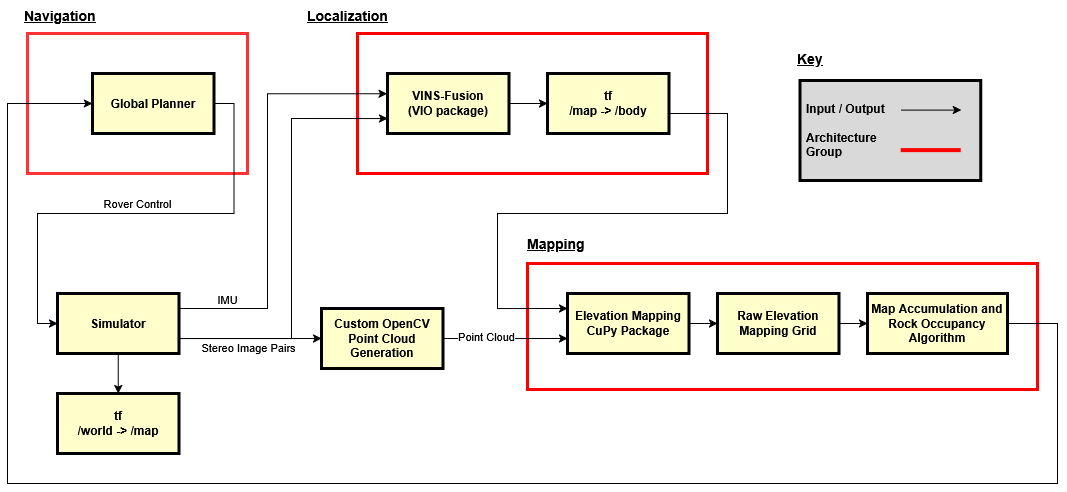

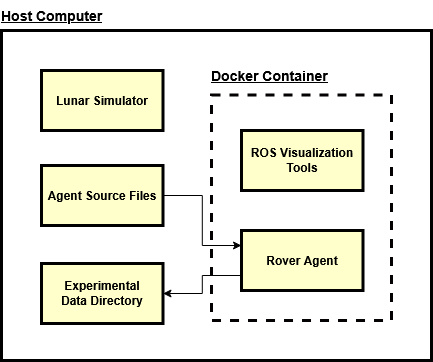
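The idea of a global planner that prefers unexplored terrain can be illustrated with a frontier search over an elevation grid. The following is only a minimal sketch, not the planner used in this project: it assumes unmapped cells are stored as NaN, and the function names `find_frontier_cells` and `nearest_frontier` are hypothetical.

```python
import numpy as np


def find_frontier_cells(elevation: np.ndarray) -> np.ndarray:
    """Return (row, col) indices of mapped cells that touch an unmapped cell.

    Assumption for this sketch: unmapped cells are stored as NaN.
    """
    known = ~np.isnan(elevation)
    unknown = ~known
    # Pad with False so cells outside the grid never count as "unknown".
    padded = np.pad(unknown, 1, constant_values=False)
    # True where any 4-connected neighbor (up, down, left, right) is unknown.
    has_unknown_neighbor = (
        padded[:-2, 1:-1] | padded[2:, 1:-1] | padded[1:-1, :-2] | padded[1:-1, 2:]
    )
    return np.argwhere(known & has_unknown_neighbor)


def nearest_frontier(elevation: np.ndarray, rover_cell: tuple):
    """Pick the frontier cell closest (Euclidean, in cells) to the rover."""
    frontier = find_frontier_cells(elevation)
    if frontier.size == 0:
        return None  # Nothing left to explore.
    distances = np.linalg.norm(frontier - np.asarray(rover_cell), axis=1)
    return tuple(int(v) for v in frontier[np.argmin(distances)])


if __name__ == "__main__":
    grid = np.full((5, 5), np.nan)
    grid[1:4, 1:4] = 0.0  # A small mapped patch around the rover.
    print(nearest_frontier(grid, (2, 2)))
```

A real planner would also weigh travel cost and obstacles rather than straight-line distance, which is where the local planner comes in.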

After some initial development and discussions with my teammates, I realized that some things would have to change. I also cut down the scope of the project. A lot of this work was new to me, and I knew from past projects that one of the important goals at this stage is to just get some solution working, then improve on it from there. With that in mind, I came up with the following software architecture. The architecture did not change after this point.

With this new plan in hand, I began developing our code.

After a couple of months of work, we had pieces of systems but had a difficult time integrating everything. We also found that our stereo-to-point-cloud pipeline was providing subpar results, which affected our mapping. We cobbled together a minimum working solution. Ultimately, though, the team was unable to submit anything for the qualifying round, which ended our attempt at competing.

As the team lead, I thought a lot about how this happened after the fact, a post-mortem of sorts. The reality is I needed to reach out for assistance sooner. I will spare the nitty-gritty details, but I could have done a better job communicating with the competition staff about submitting our solution. They required our agent to be submitted via Docker, which makes complete sense for an asynchronous, simulation-based competition. However, we were also using Docker for our solution, and we ran into error after error when trying to combine it with LAC’s Docker submission process. I eventually asked the staff for guidance, and their response was very helpful and accommodating. But it all happened too late, too close to the submission deadline. I definitely learned that there’s no harm in reaching out for a bit of help, and that I should have a plan for which platforms we develop our code on. Our initial solution required Docker due to Ubuntu and Python version mismatches, so I should have been using LAC’s Dockerfile setups from the get-go.

All that said, this was not the end of our solution package. I wanted to continue even after our competition run ended in order to get our algorithm running in some capacity. I find the idea of utilizing machine learning for an exploratory rover very interesting, and wanted to see this through.

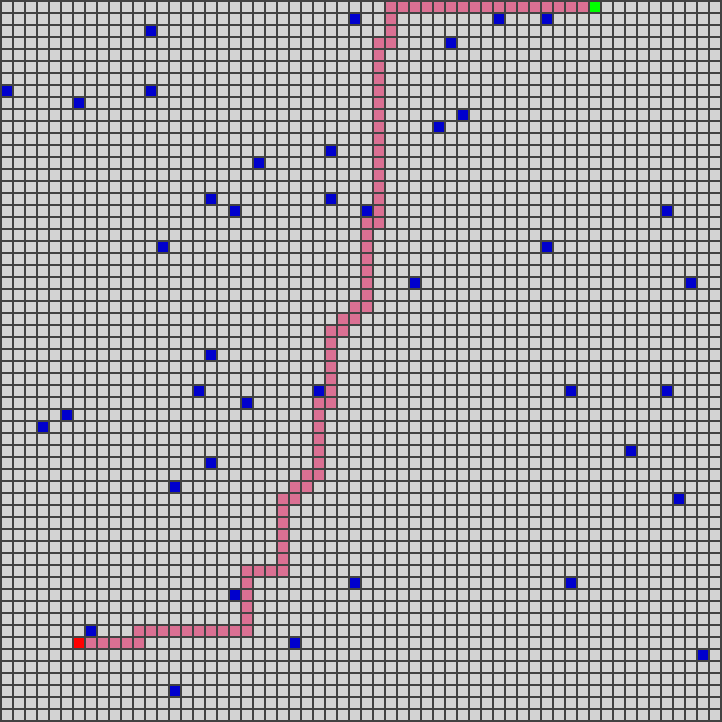

The first thing I did after failing to meet the qualification deadline was fix our Docker setup. The picture below visualizes the new setup for use with the lunar simulator and the team’s source code.

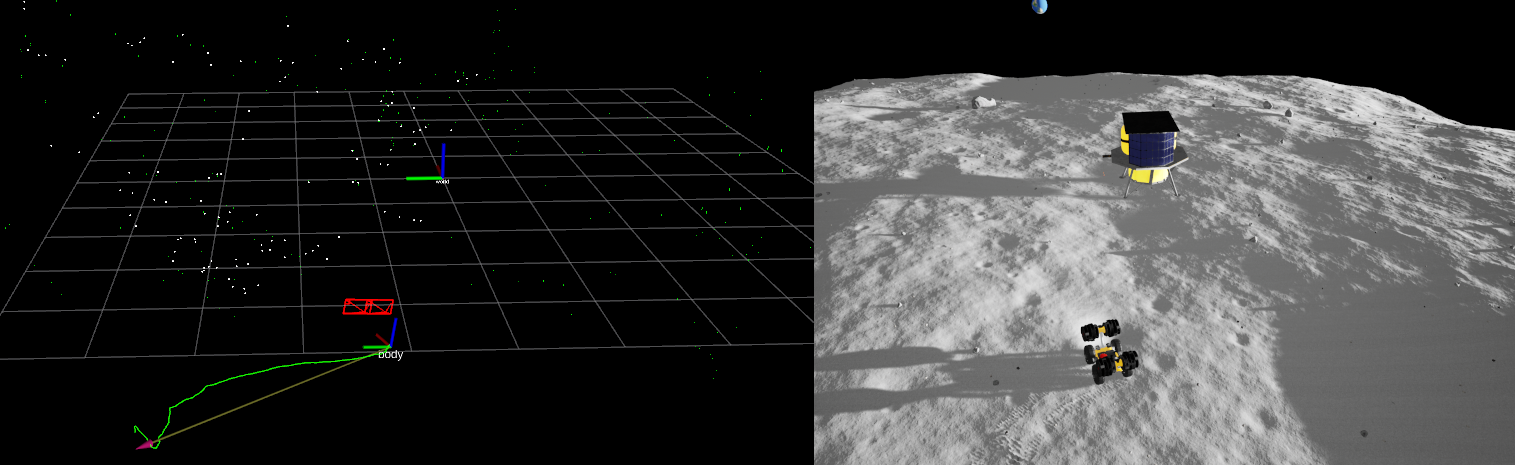

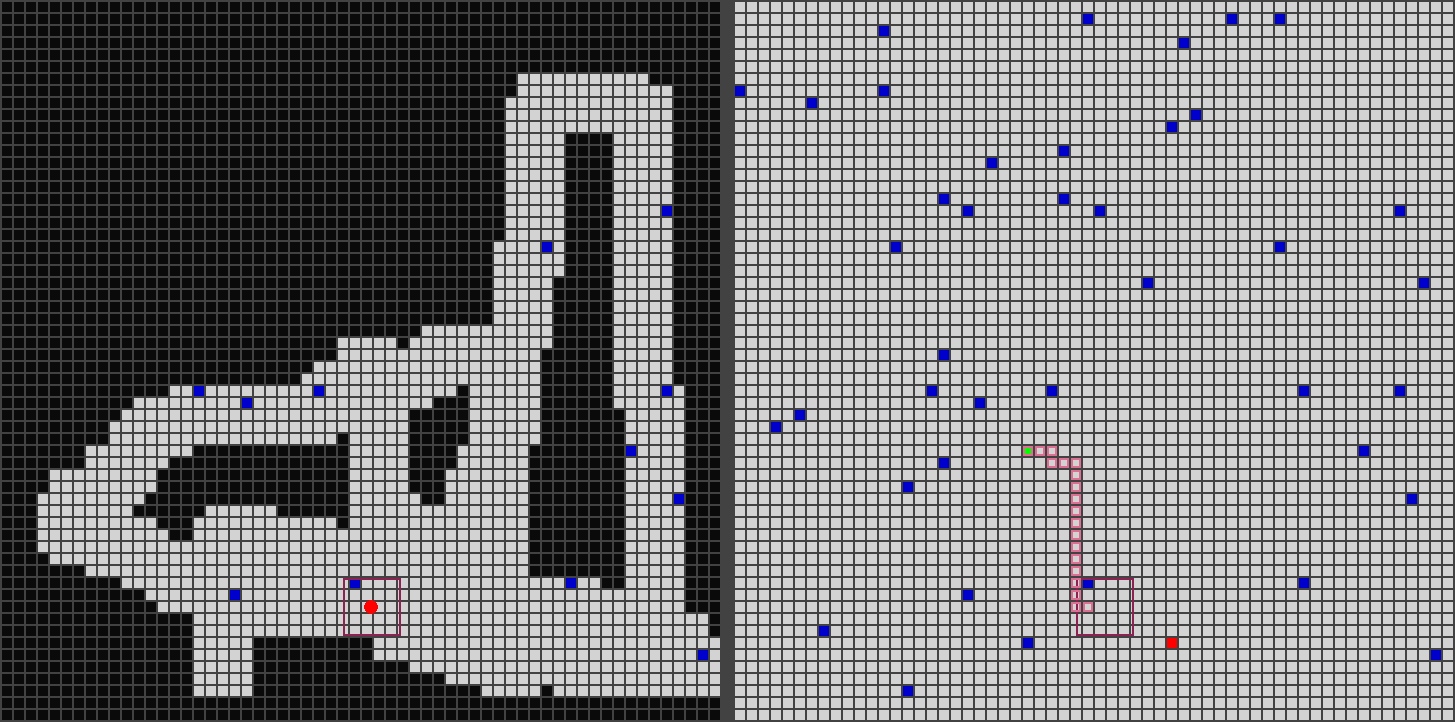

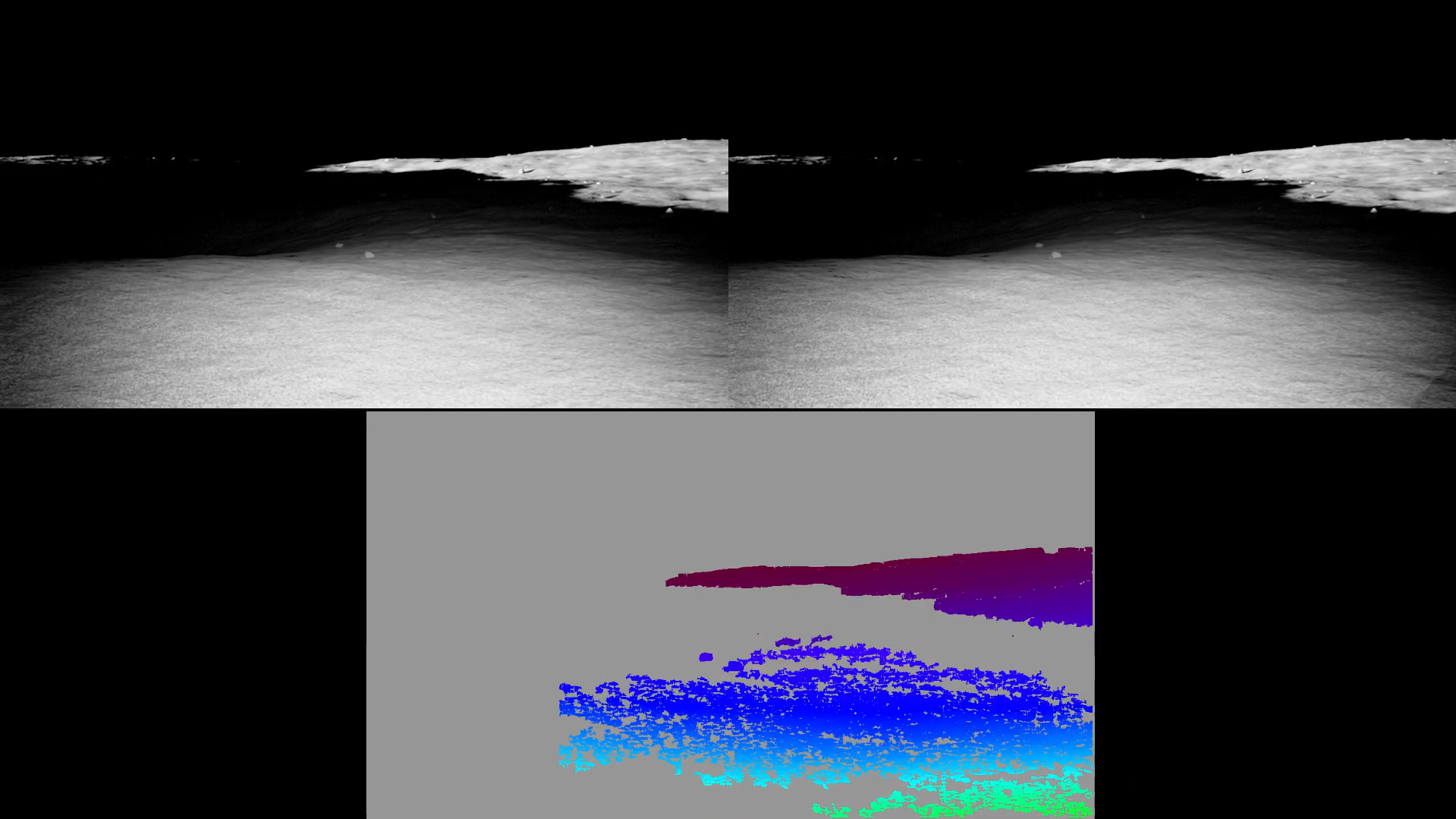

I also explored more point cloud generation techniques in order to have more control over the input to our mapping package. Ideally, an improved point cloud would yield more mapping data. I started by trying to tune the parameters provided by ROS.

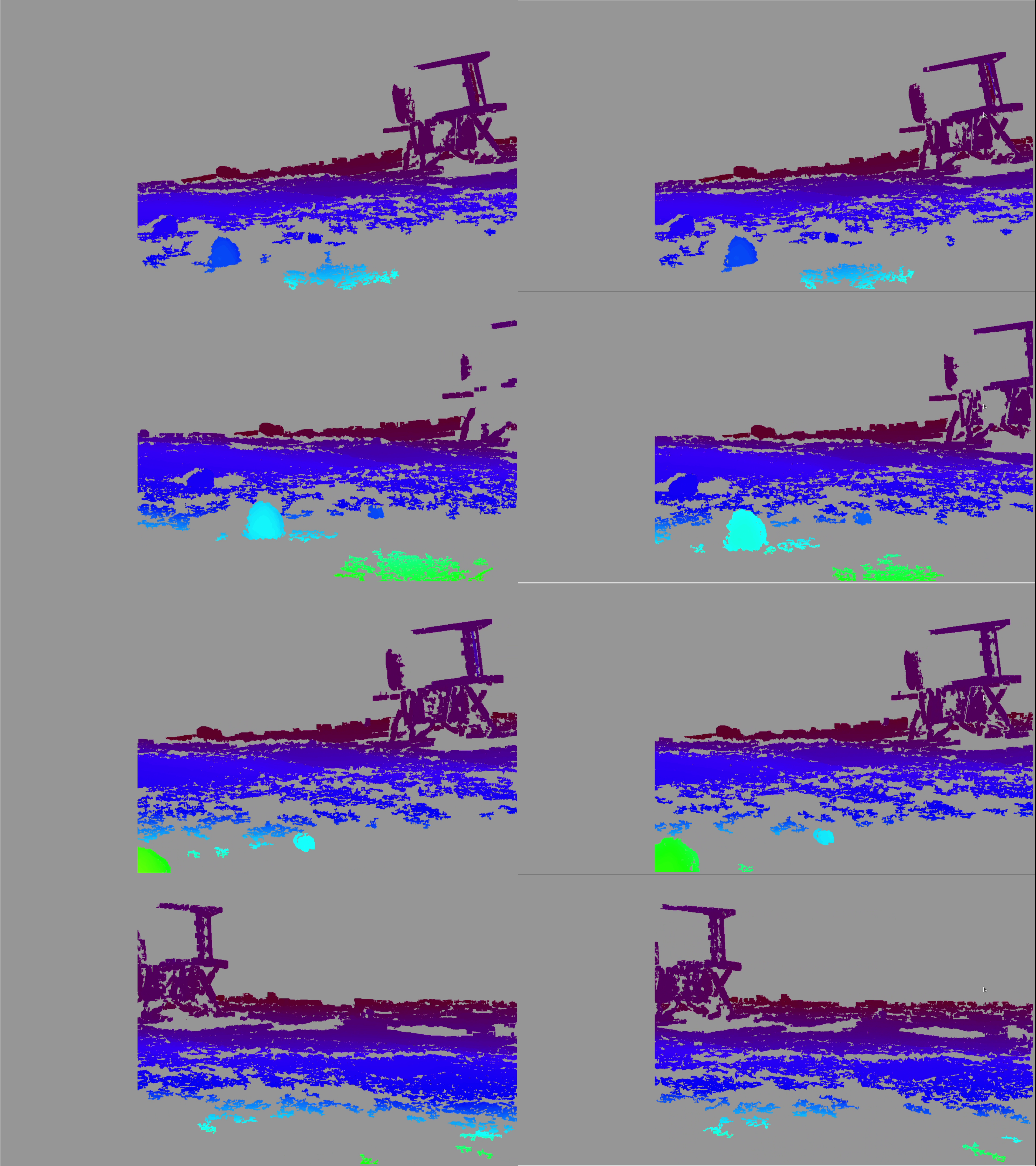

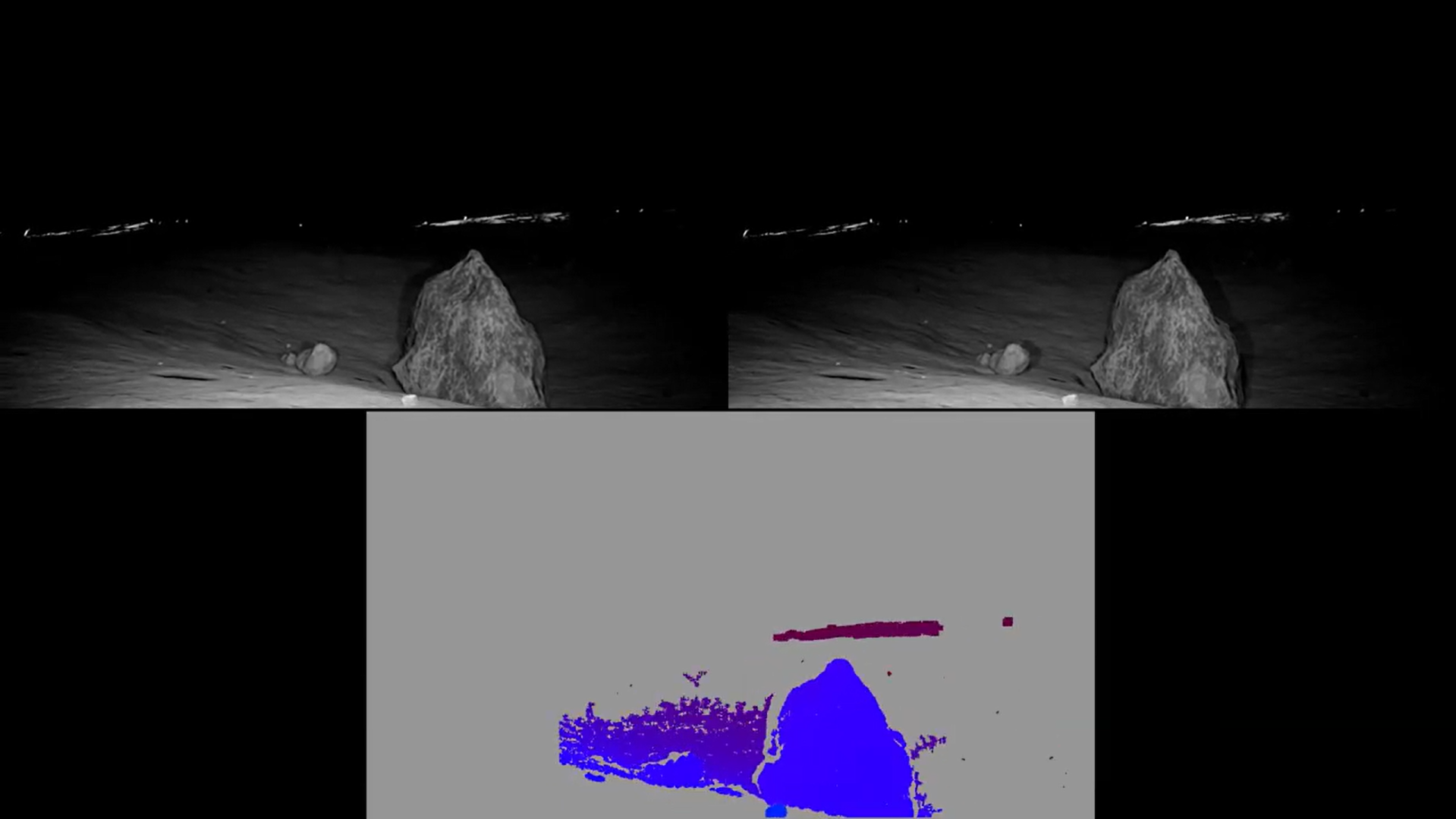

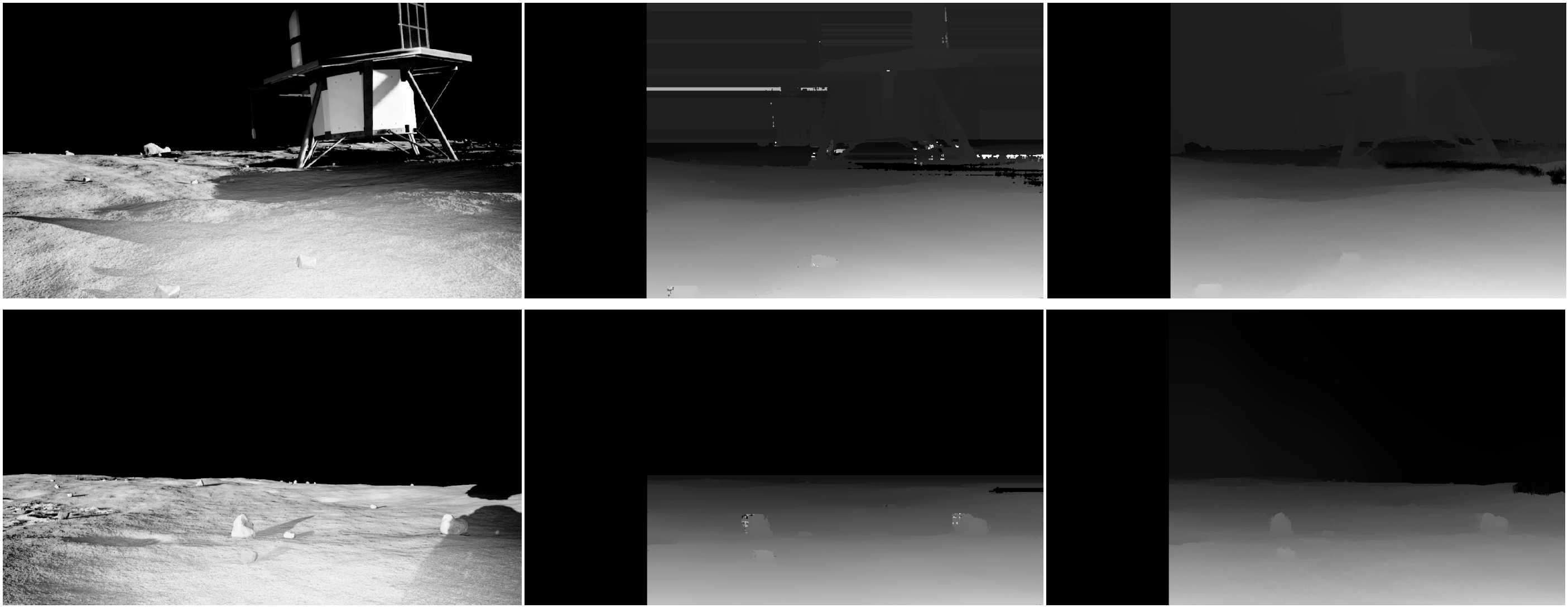

This process did not improve the point clouds very much. Part of the issue here touches on problems with the lunar environment itself. Low light levels, long shadows, and terrain that is hard to differentiate all lead to errors in stereo matching, or to no match at all (stereo matching is part of the process of turning a stereo pair into a 3D point cloud). Now, this output is not actually too terrible. It is fairly dense, with some voids here and there. However, note that the lunar lander, a part of the theoretical mission of this competition, is in view. This creates many distinct points that drastically help stereo matching. When it is not in view, the disparity maps end up looking like the following:

This output is not necessarily a bad thing. The colored disparity map shows data only for stereo matches that have a high confidence level. My goal, however, was for the output to be a dense map, in order to create a smooth point cloud, which in turn provides continuous grid mapping data. My hope was that more data would improve the overall solution of the autonomous agent.

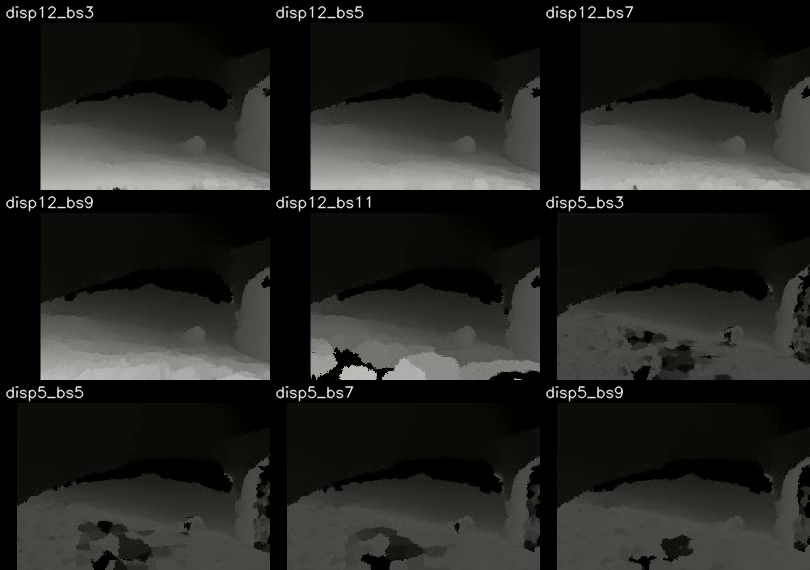

I ended up solving this problem by using a different algorithm to produce a disparity map: semi-global block matching. This method also uses a smoothing filter, which helps create a dense map.

This technique produces a fairly smooth disparity map, but it has a large compute time. This was fine for the competition, however, as it was simulation only. We were given limits on real time vs compute time.

Also at this time, I started developing my own tools and scripts in order to compare different sets of parameters and create videos from data. After tuning, I had my finished stereo-pair-to-point-cloud pipeline. Since this was a bit of a custom process, I had to create my own ROS node to produce this output.
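For readers curious about the last step of such a pipeline, turning a disparity map into 3D points, here is a minimal sketch using the standard pinhole stereo relation (depth = focal length × baseline / disparity). It is not the ROS node from this project. It assumes rectified images, a camera frame with x to the right, y down, and z forward, and the function and parameter names (`disparity_to_points`, `focal_px`, `baseline_m`, `cx`, `cy`, `min_disparity`) are hypothetical.

```python
import numpy as np


def disparity_to_points(disparity, focal_px, baseline_m, cx, cy, min_disparity=0.5):
    """Reproject a disparity map (in pixels) to an (N, 3) array of XYZ points.

    Assumptions for this sketch: rectified stereo pair, focal length in
    pixels, baseline in meters, principal point (cx, cy) in pixels.
    """
    rows, cols = np.indices(disparity.shape)
    # Drop pixels without a usable match (NaN, zero, or tiny disparity).
    valid = np.isfinite(disparity) & (disparity > min_disparity)
    d = disparity[valid]
    z = focal_px * baseline_m / d            # depth along the optical axis
    x = (cols[valid] - cx) * z / focal_px    # right of the optical center
    y = (rows[valid] - cy) * z / focal_px    # below the optical center
    return np.column_stack((x, y, z))


if __name__ == "__main__":
    demo = np.array([[10.0, 0.0], [np.nan, 20.0]])
    print(disparity_to_points(demo, focal_px=100.0, baseline_m=0.2, cx=0.5, cy=0.5))
```

Because depth is inversely proportional to disparity, voids or noise in the disparity map translate directly into holes or scattered points in the cloud, which is why a dense, smooth disparity map mattered so much here.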

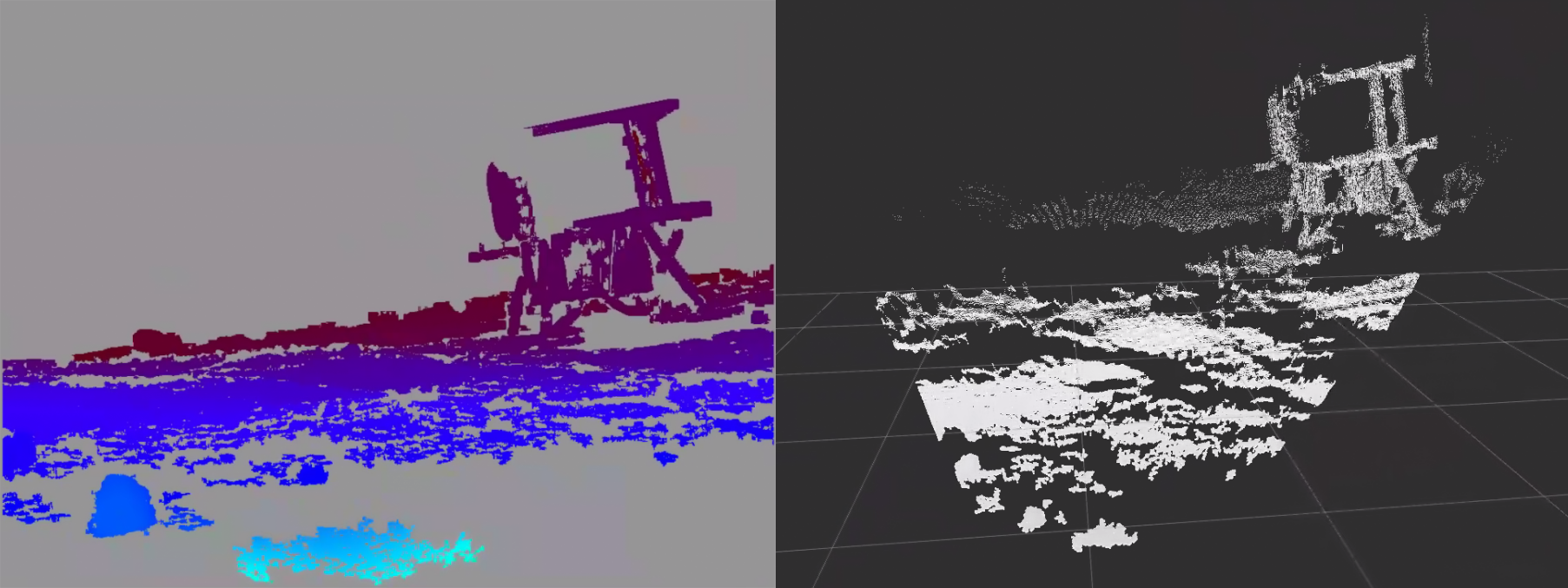

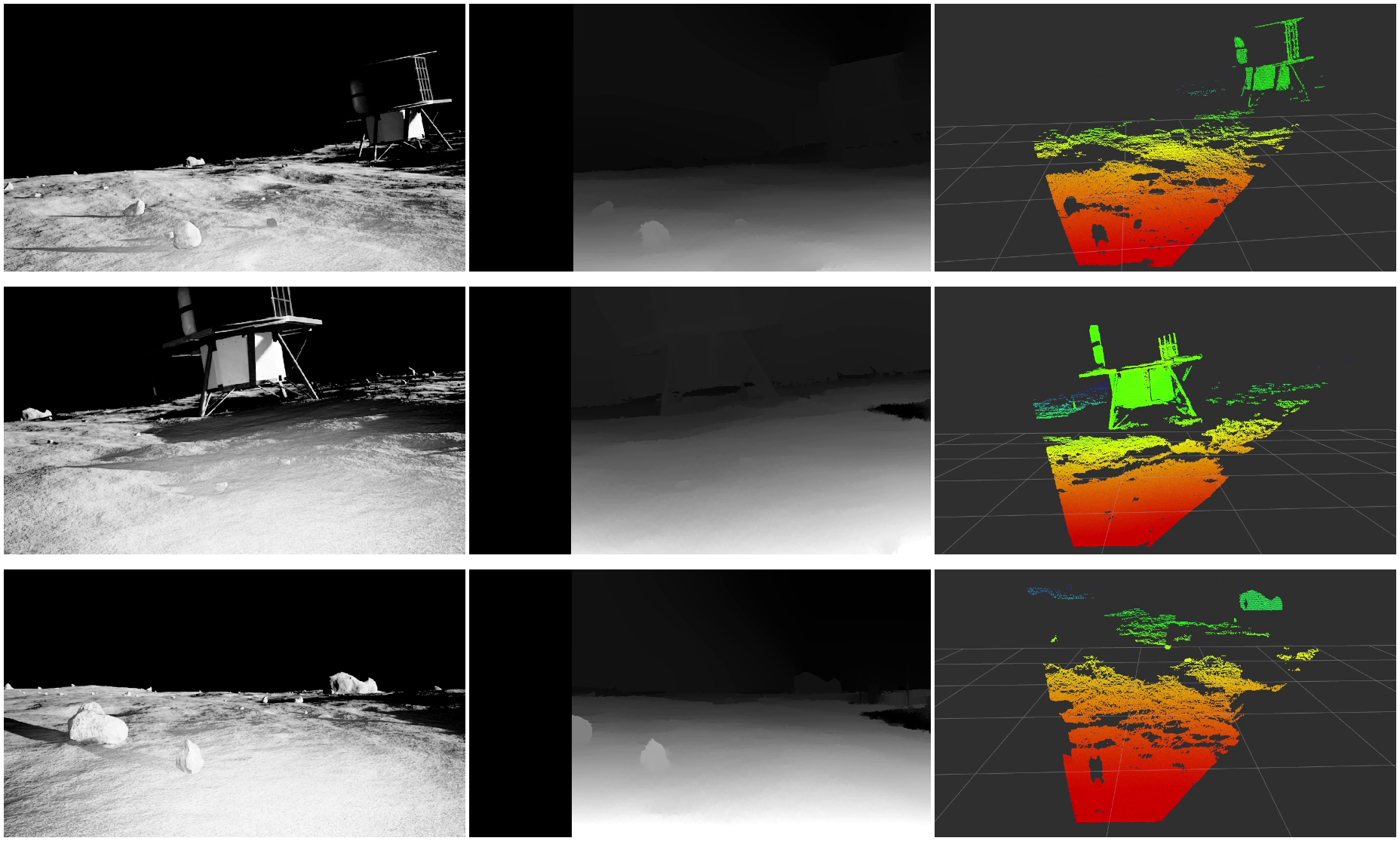

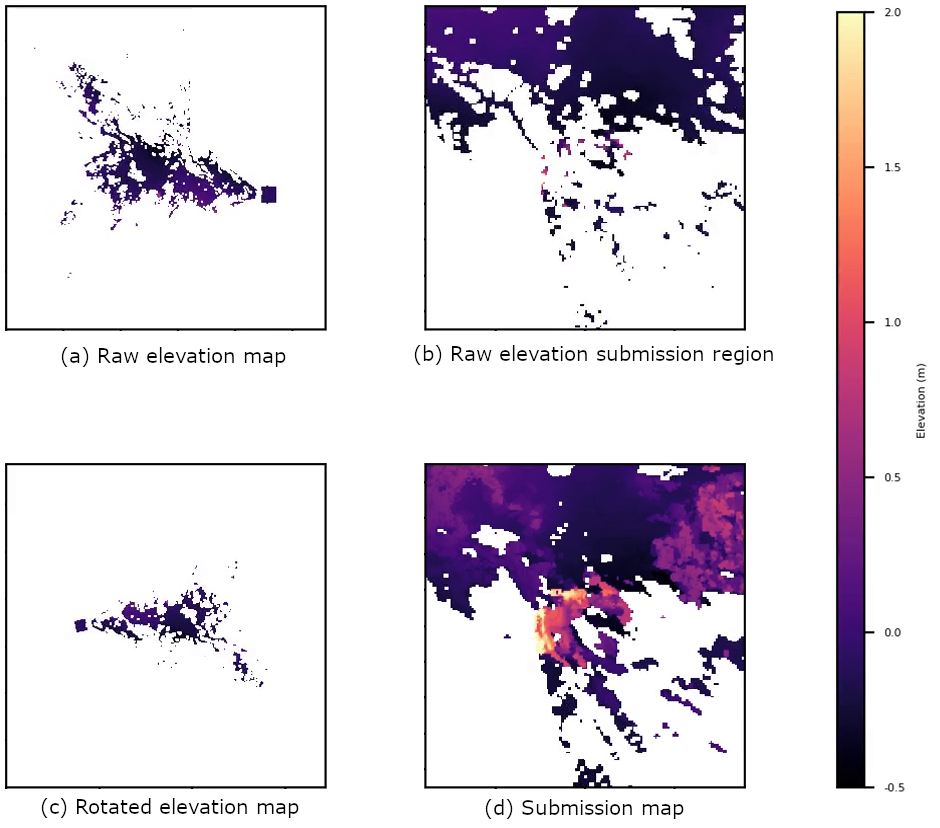

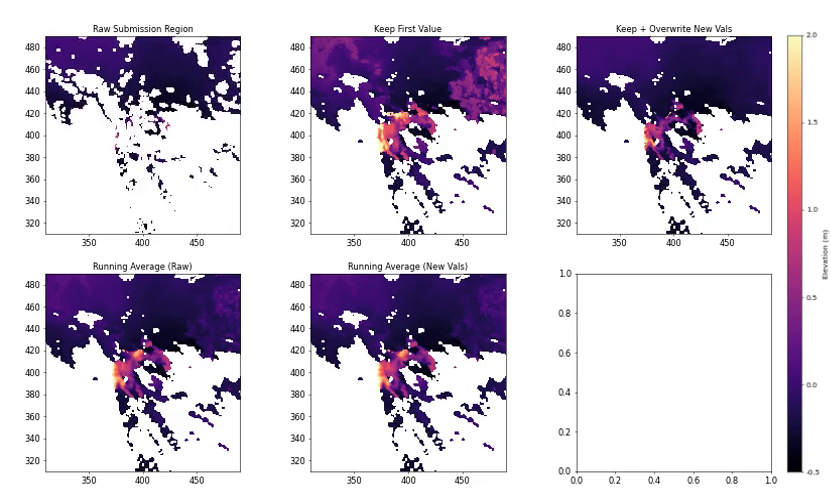

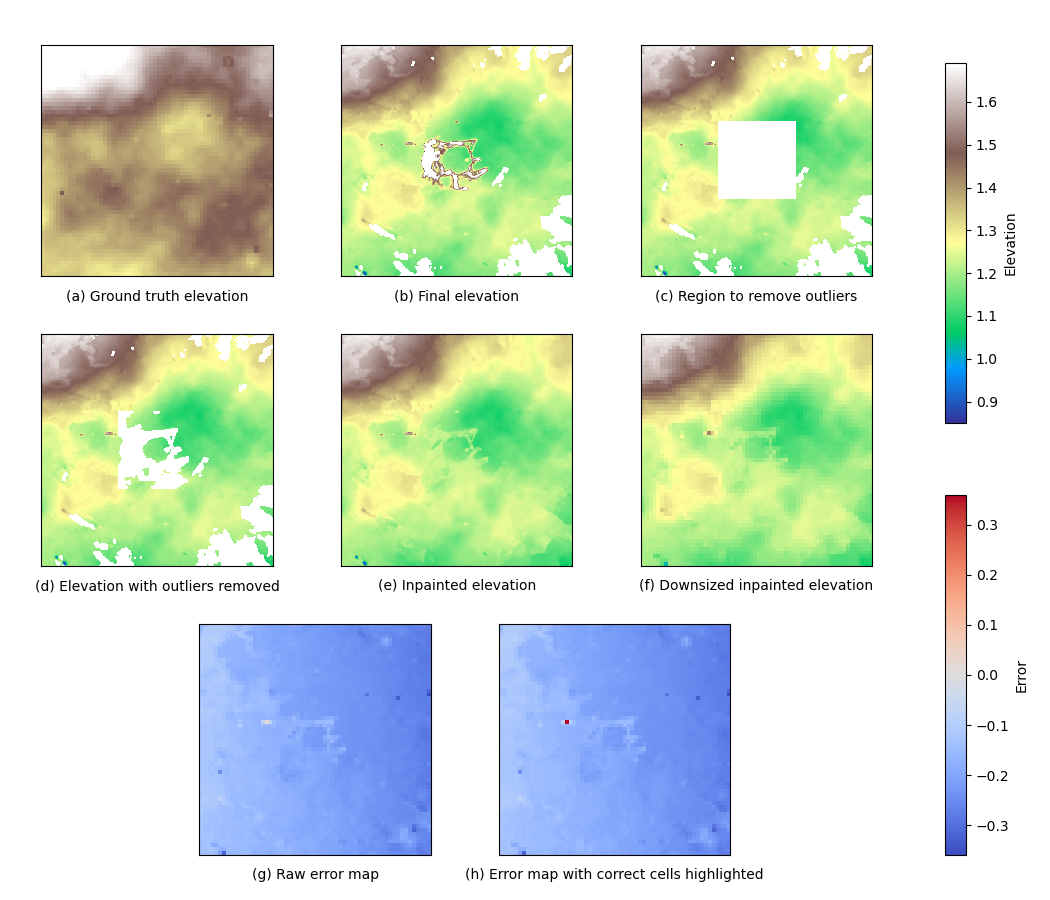

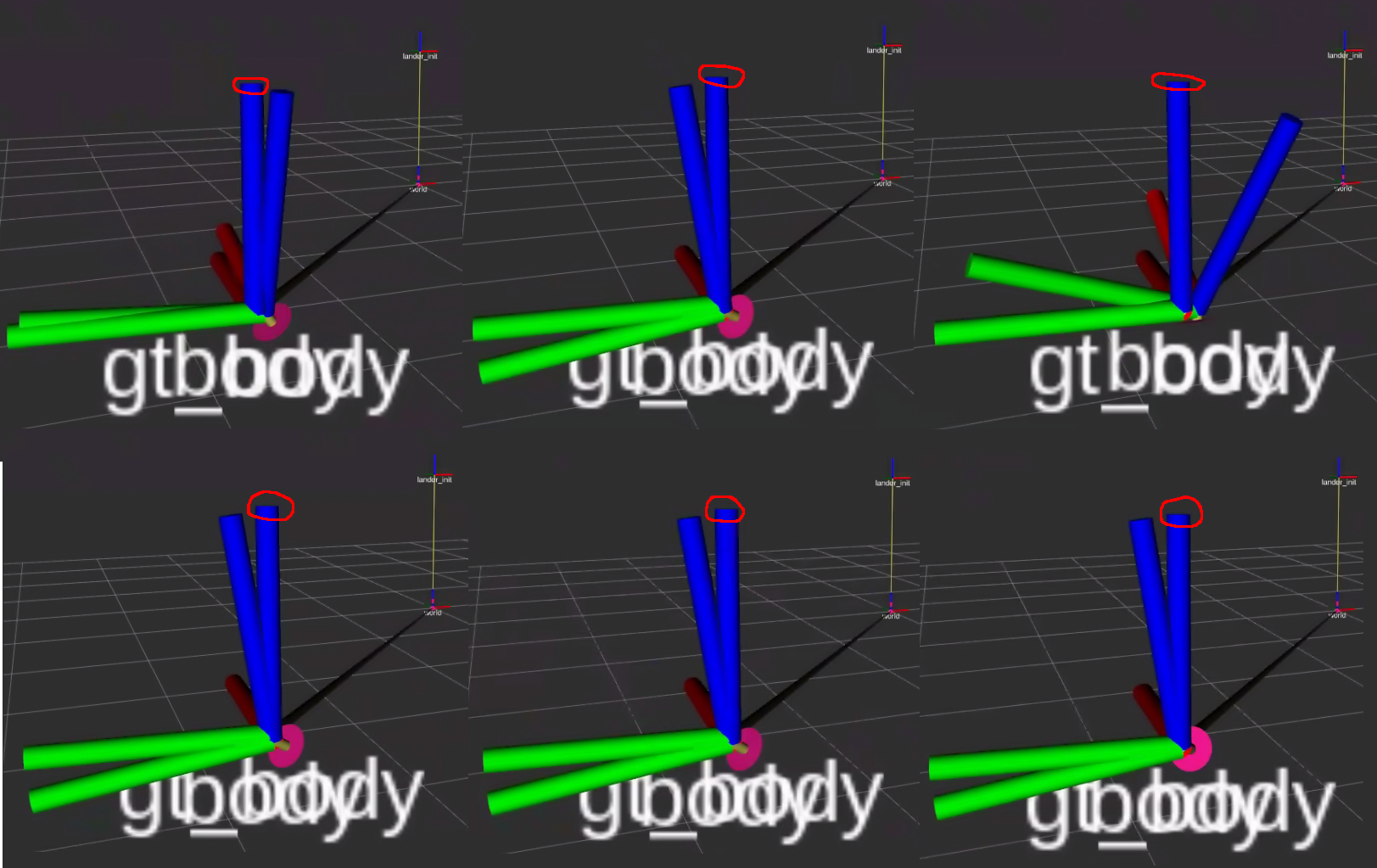

Next, I started experimenting more with the elevation data I was receiving. I am skipping some details here, but the images below show my general process. I had fun managing this data and developing scripts to create plots.

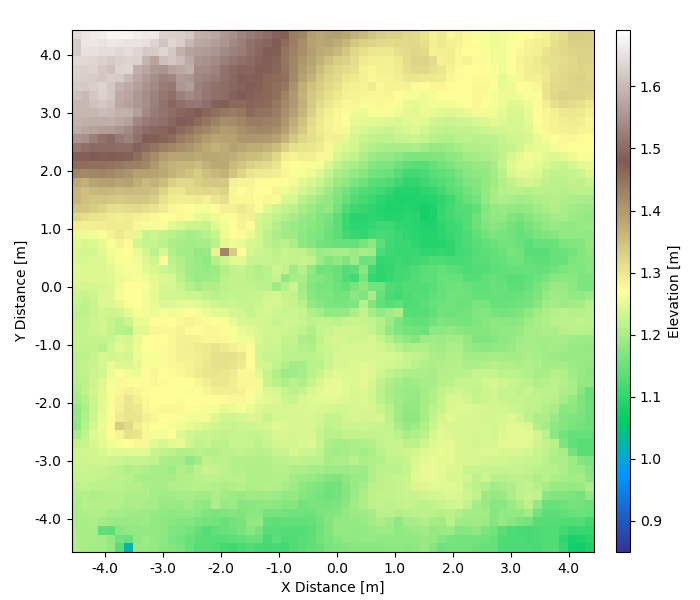

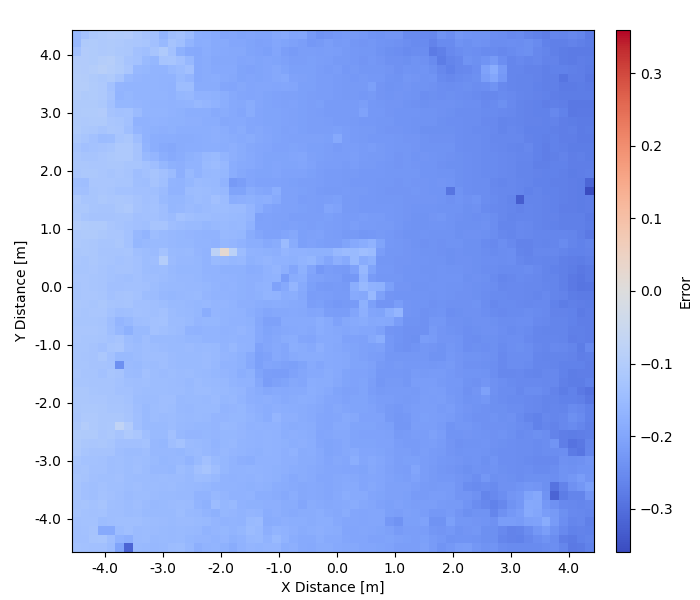

With the updated architecture, I drove the rover in simulation to collect elevation data. Below is example data from a single trial. I performed many such experiments, and all of them produced similar data with this solution.

I found that this solution performed poorly. Because of the elevation errors, I was also unable to produce a working rock occupancy grid (which was required for the competition). In turn, rock obstacles could not be detected, and a final autonomous solution was not achievable. According to competition guidelines, this data would have had only a single scoring grid cell (out of 3,600 total).

Upon further inspection, it turned out that relying solely on visual-inertial odometry for localization created many small elevation errors that accumulate as the rover drives.
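To give a feel for how small per-step errors can stack up, here is a toy model, not an analysis from my thesis. It assumes each odometry update adds an independent, zero-mean vertical error; the step counts and error size are made-up illustration values, and `simulate_elevation_drift` is a hypothetical name.

```python
import numpy as np


def simulate_elevation_drift(num_steps, step_error_std_m, seed=0):
    """Return the accumulated vertical error (meters) after each odometry step.

    Toy assumption: every step adds independent Gaussian noise with zero
    mean and the given standard deviation. Real drift may also be biased.
    """
    rng = np.random.default_rng(seed)
    per_step_error = rng.normal(0.0, step_error_std_m, size=num_steps)
    # Without an absolute reference, each new error adds to all earlier ones.
    return np.cumsum(per_step_error)


if __name__ == "__main__":
    for steps in (10, 1000):
        finals = [abs(simulate_elevation_drift(steps, 0.001, seed=s)[-1])
                  for s in range(200)]
        print(steps, "steps -> mean |drift| (m):", float(np.mean(finals)))
```

Under this assumption the typical drift grows with the square root of the number of steps, and any consistent bias would grow linearly on top of that. An absolute reference, such as the lander's fiducial tags, is one way to bound this kind of error.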

Now, these results may seem negative, but I was extremely happy with everything. I was happy to have followed through on a complex project and to understand every bit of it. I had actually planned ways to solve the issues I was having, but I simply needed to wrap up my progress at a stopping point in order to produce my thesis and graduate.

Thank you for reading this far. I thoroughly enjoyed working on this project, and I hope you learned something along the way. If anyone out there ever wants to discuss this work, please feel free to contact me!